Intro

AI systems deployed in regulated industries operate under binding constraints: data handling, decision traceability, and model behavior are subject to compliance oversight rather than operational preference. In financial services, healthcare, and government, these systems support credit risk assessment, clinical decision support, and regulatory reporting, functions where model errors carry legal, financial, and reputational consequences. In these environments, traceability and reliability are not aspirational standards but audit-enforceable requirements that govern every stage of the AI development lifecycle.

Building AI models capable of operating in regulated environments demands more than technical expertise; it requires a data infrastructure designed from the outset around compliance, auditability, and controlled access. Data infrastructure must enforce the policy boundaries, access controls, and documentation standards that regulated deployment environments legally mandate. Data partners like Welo Data provide the governed annotation, evaluation, and lifecycle oversight infrastructure organizations need to develop AI systems that meet regulated industry requirements.

Data Infrastructure as a Governance Layer

In regulated sectors, data pipelines function as a core component of AI governance. Training datasets often contain sensitive financial records, medical documentation, or proprietary operational information. Without structured controls, these datasets can introduce compliance risk or compromise confidentiality.

Secure data infrastructure addresses this challenge by implementing controlled data access, structured annotation environments, and verifiable audit trails. Every stage of the data lifecycle, from collection to annotation and evaluation, must be documented and traceable.
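One way to make an audit trail verifiable is to chain entries by hash, so that editing any earlier record invalidates everything after it. The following is a minimal sketch under stated assumptions, not a description of any particular platform: the `AuditLog` class, its field names, and the stage labels are hypothetical and chosen only for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Serialize with sorted keys so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, actor: str, stage: str, detail: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "stage": stage, "detail": detail, "prev": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "stage", "detail", "prev")}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("ingest-service", "collection", "batch 1 received")
log.append("annotator-17", "annotation", "labelled 200 items")
print(log.verify())
```

A real deployment would also need durable, access-controlled storage and trusted timestamps. The sketch only illustrates how tampering with a past entry becomes detectable.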

This approach positions data infrastructure as an active governance layer, enforcing policy boundaries, maintaining audit accountability, and sustaining compliance alignment across the full AI development lifecycle.

Managing Sensitive Data During Model Development

Developing AI models for regulated industries requires data handling protocols that enforce confidentiality, limit exposure, and maintain the audit trails that compliance frameworks demand. Annotation teams may interact with data containing personally identifiable information, confidential transactions, or legal records.

To reduce exposure, organizations often implement controlled workspaces, role-based access permissions, and anonymization procedures. Synthetic data generation extends training coverage by introducing controlled edge-case scenarios and compliance-sensitive conditions without exposing actual records, preserving both data utility and confidentiality requirements.
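As an illustration of how role-based access and identifier masking might combine, the sketch below filters a record down to the fields a role may see and replaces sensitive values with keyed hashes. The role names, field names, and key handling are all assumptions made for the example. Note that keyed hashing is pseudonymization rather than full anonymization, since anyone holding the key can re-link the values.

```python
import hashlib
import hmac

# Hypothetical policy: which fields each role may see.
ROLE_FIELDS = {
    "annotator": {"record_id", "note_text"},
    "reviewer": {"record_id", "note_text", "account_ref"},
}
# Hypothetical set of fields that are masked even when visible.
PII_FIELDS = {"account_ref"}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace a value with a truncated keyed hash (stable for a given key)."""
    return hmac.new(key, value.encode(), hashlib.sha256).hexdigest()[:16]


def view_for_role(record: dict, role: str, key: bytes) -> dict:
    """Return only the fields the role may see; unknown roles see nothing."""
    allowed = ROLE_FIELDS.get(role, set())
    view = {}
    for name, value in record.items():
        if name not in allowed:
            continue
        view[name] = pseudonymize(str(value), key) if name in PII_FIELDS else value
    return view


record = {"record_id": 42, "note_text": "late payment query",
          "account_ref": "ACC-0001", "national_id": "000-00-0000"}
key = b"example-key-do-not-use"  # assumption: real keys come from a secrets manager
print(view_for_role(record, "annotator", key))
print(view_for_role(record, "reviewer", key))
```

Denying by default (an unknown role or an unlisted field yields nothing) keeps a policy gap from turning into an exposure.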

These controls contain the compliance exposure of distributed annotation operations while preserving the data representativeness that production model performance requires.

Structured Annotation and Human Oversight

In regulated environments, training data quality directly determines whether AI systems meet the performance and accountability thresholds that compliance frameworks require, making annotation governance a primary risk control. Annotation pipelines must operate under documented guidelines and structured quality control mechanisms that enforce consistency, support audit review, and reduce the labeling variance that degrades model reliability.

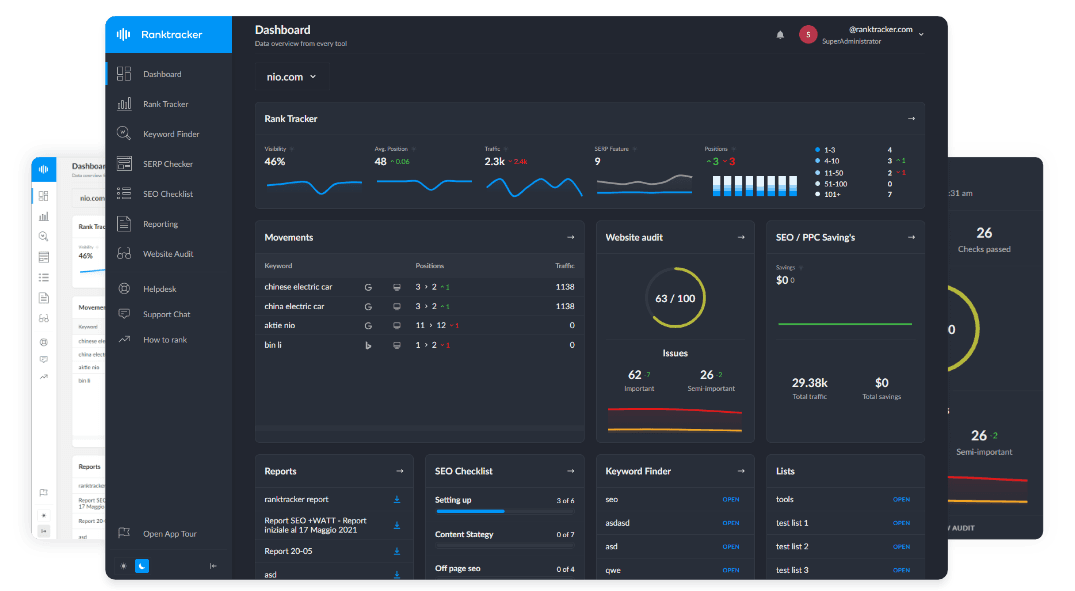

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

Reviewer hierarchies, consensus scoring, and benchmark task calibration enforce labeling consistency across distributed annotation teams, reducing the variance in training signals that produces classification instability in production. Continuous evaluation pipelines compare model outputs against curated benchmark datasets and edge-case simulations to detect performance degradation before deployment thresholds are breached. Escalation protocols route ambiguous or high-stakes labeling decisions to domain specialists, ensuring that classification boundaries align with regulatory and operational requirements.
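Consensus scoring with escalation can be sketched as a majority vote plus an agreement threshold. The function name, the return shape, and the default threshold of 0.6 below are illustrative assumptions; a production pipeline would likely also weight annotators by calibration results.

```python
from collections import Counter


def consensus(labels: list[str], min_agreement: float = 0.6) -> dict:
    """Majority-vote a set of annotator labels; escalate when agreement is low.

    `min_agreement` is a hypothetical policy threshold, not a standard value.
    """
    if not labels:
        raise ValueError("at least one label is required")
    top_label, top_count = Counter(labels).most_common(1)[0]
    agreement = top_count / len(labels)
    escalate = agreement < min_agreement
    return {
        # No label is accepted for escalated items; a specialist decides instead.
        "label": None if escalate else top_label,
        "agreement": agreement,
        "escalate": escalate,
    }


print(consensus(["approve", "approve", "deny"]))
print(consensus(["approve", "deny"]))
```

Items returned with `escalate` set would be routed to a domain specialist queue rather than written to the training set.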

Human-in-the-loop review integrates domain specialist judgment into the evaluation pipeline, validating that training data and model outputs meet the regulatory standards that automated quality checks cannot fully assess.

Governance Integration Across the AI Lifecycle

Secure data infrastructure must integrate with lifecycle governance systems that connect annotation, evaluation, and model refinement under a unified oversight framework. Such a framework preserves compliance continuity and maintains a verifiable development record.

Mature AI development environments integrate QA loops, annotator calibration sessions, monitoring dashboards, and periodic dataset reviews into a continuous oversight structure that detects compliance drift before it affects deployed model behavior. This oversight structure ensures dataset evolution remains aligned with regulatory constraints throughout model development.

Monitoring tools track performance signals across deployment environments, providing early detection of model behavior changes that may indicate data drift, distributional shift, or emerging compliance exposure. When performance degradation is detected, targeted dataset updates and structured fine-tuning cycles restore operational thresholds, closing the refinement loop within the governed lifecycle framework.

Supporting Reliable AI Deployment

Organizations operating in regulated environments cannot treat data governance as an implementation afterthought: the compliance, traceability, and access control requirements of these sectors must be engineered into the data infrastructure from the outset. Governed data pipelines, secure annotation environments, and continuous monitoring provide the structural rigor that regulated AI deployment requires, sustaining reliability and compliance accountability across the full operational lifecycle.

Platforms that integrate annotation governance, structured evaluation, and continuous monitoring enable organizations to build AI systems that meet both performance thresholds and regulatory accountability standards at deployment scale.

Conclusion

AI systems used in regulated industries must meet rigorous standards for security, traceability, and operational reliability. Achieving this requires a data infrastructure that functions as a governance system throughout the AI lifecycle.

By integrating secure data management, human oversight, and structured evaluation processes, organizations reduce deployment risk while maintaining consistent model performance. In regulated environments where accountability is non-negotiable, governed data infrastructure provides the operational foundation for dependable, audit-ready AI systems.