Intro

If you’re an advanced user comparing Claude and GPT-4, you’re probably not asking which one writes nicer blog intros. You care about raw reasoning quality, technical correctness, long-context behavior, output limits, and how reliably the model can operate inside real engineering workflows.

This guide compares Claude and GPT-4 through that lens. It also explains a practical reality in 2026: “GPT-4” often refers to a family of successors and compatibility endpoints, while the most capable OpenAI options for technical work are typically the newer GPT-4.1/GPT-5-class models. Still, many teams and power users keep GPT-4 in the conversation because of legacy behavior, predictable formatting, and established integrations.

Overview of Both Tools

What Is Claude?

Claude is built by Anthropic. In 2026, Anthropic’s frontier models (for example, Claude Opus 4.6 and Sonnet 4.6) are explicitly positioned around careful planning, strong coding performance, and extremely large context windows—up to a 1M token context window in beta for select tiers and organizations. (anthropic.com)

Claude tends to shine when you need:

- Long-context reasoning over large codebases or documents

- Structured, deliberate analysis

- Strong code review and debugging behaviors in complex projects (anthropic.com)

What Is GPT-4?

GPT-4 is OpenAI’s earlier frontier-generation model, made widely available through the OpenAI API and, historically, in ChatGPT. OpenAI has since introduced newer families (including GPT-4.1 and GPT-5-class models) and has run deprecation cycles for certain GPT-4 variants such as gpt-4-32k. (developers.openai.com)

For advanced users, GPT-4 is often evaluated on:

- Reasoning stability on complex tasks

- Code generation and refactoring

- Tool-calling patterns (depending on the endpoint)

- Compatibility with older prompts and existing pipelines

Feature Comparison

Raw Reasoning and “Thinking Style”

Claude’s best models are optimized to plan more carefully and sustain long, multi-step tasks—particularly in code-heavy environments. Anthropic explicitly frames Opus 4.6 improvements around careful planning and reliability in larger codebases. (anthropic.com)

GPT-4’s reasoning quality is still strong, but in 2026 the “raw reasoning ceiling” many developers want is more commonly associated with newer OpenAI offerings (like GPT-4.1 or GPT-5-class models). If you’re strictly comparing “Claude vs GPT-4,” you’re comparing a current frontier Claude to an older OpenAI generation in many real deployments.

Practical takeaway: for multi-step technical work, Claude often feels more deliberate; GPT-4 often feels more concise and prompt-sensitive, with behavior that varies depending on which exact GPT-4 variant or endpoint you’re using.

Context Window and Token Limits

This is one of the biggest differences for advanced workflows.

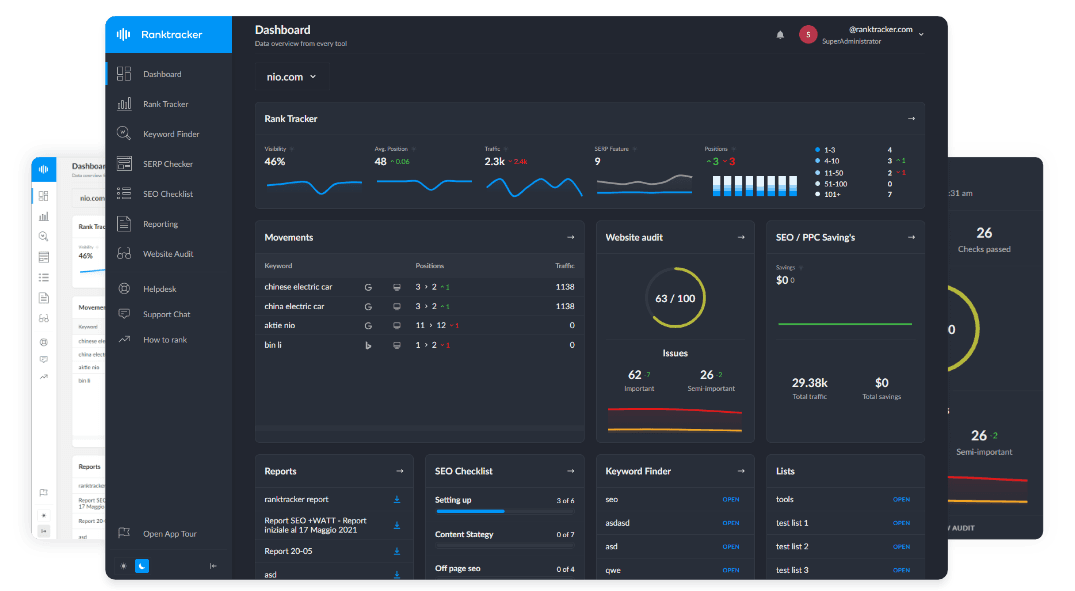

Claude:

- Supports a 1M token context window (beta) on specific Claude models, with access gated by usage tier/custom limits. (platform.claude.com)

GPT-4:

- Some GPT-4 variants (notably gpt-4-32k) have been on a deprecation path, with continued access limited to existing users after the cutoff. (developers.openai.com)

- In practice, many teams moved to newer OpenAI models for large-context needs (for example, GPT-4.1 is documented with a ~1M token context window). (developers.openai.com)

Practical takeaway: if your “advanced user” work involves whole-repo ingestion, large diffs, long logs, or multi-document reasoning, Claude’s 1M context option (where available) is a direct advantage. If you need OpenAI with very large context, you typically end up on GPT-4.1/GPT-5-class rather than legacy GPT-4. (developers.openai.com)
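Before committing to a whole-repo ingestion workflow, it can help to estimate whether your material fits in the window at all. The following is a minimal sketch, not a real tokenizer: the 4-characters-per-token ratio is an assumed rule of thumb, and the function names and the reserved-output parameter are hypothetical. Use the provider's own token counting for anything that matters.

```python
# Assumption: ~4 characters per token is only a rough heuristic.
# Real tokenizers vary by model, language, and content type.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (rounded up)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def fits_in_context(texts, context_limit, reserved_for_output=0):
    """Return (estimated_total_tokens, fits) for a collection of text blobs.

    reserved_for_output leaves headroom for the model's reply, since input
    and output commonly share the same window.
    """
    total = sum(estimate_tokens(t) for t in texts)
    return total, total + reserved_for_output <= context_limit


if __name__ == "__main__":
    # Hypothetical inputs: two files of 4,000 characters each.
    files = ["a" * 4000, "b" * 4000]
    total, ok = fits_in_context(files, context_limit=1_000_000,
                                reserved_for_output=8_000)
    print(total, ok)
```

If the estimate lands anywhere near the limit, treat it as "does not fit" and chunk or filter the input, because the heuristic can be off by a wide margin for code and non-English text.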

Technical Output Quality

Both can produce high-quality code, but they behave differently:

Claude is frequently strong at:

- Codebase-aware refactors (when you provide enough repo context)

- Explaining tradeoffs clearly

- Systematic debugging narratives

GPT-4 is frequently strong at:

- Quick implementation drafts

- Familiar framework patterns

- Shorter iteration loops

One important nuance: output quality is often constrained less by “model intelligence” and more by output token ceilings, your tooling, and whether you’re using diff-based workflows. OpenAI explicitly emphasized diff-format reliability and higher output token limits for GPT-4.1 relative to prior generations. (openai.com)

Practical takeaway: if you need large-file rewrites or long code outputs, make sure you’re not silently bottlenecked by output limits or your wrapper’s truncation rules.
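One way to catch silent truncation is to inspect the stop reason your wrapper receives and run a cheap structural check on the text. This is a sketch under assumptions: the reason strings below ("length" and "max_tokens") are what the OpenAI and Anthropic APIs have used to signal a token ceiling, but confirm them against current documentation, and the function name is hypothetical.

```python
# Built at runtime so this example does not contain a literal fence marker.
FENCE = "`" * 3

# Assumption: stop-reason values that signal the output hit a token ceiling.
# Verify against the current API reference for the endpoint you use.
TRUNCATION_REASONS = {"length", "max_tokens"}


def check_output(text, stop_reason):
    """Return a list of problems that suggest the output was cut short."""
    problems = []
    if stop_reason in TRUNCATION_REASONS:
        problems.append("stopped at token limit")
    # An odd number of fence lines means a code block was never closed.
    fence_lines = [ln for ln in text.splitlines()
                   if ln.lstrip().startswith(FENCE)]
    if len(fence_lines) % 2 == 1:
        problems.append("unclosed code fence")
    return problems


if __name__ == "__main__":
    partial = FENCE + "python\nx = 1\n"
    print(check_output(partial, "length"))
```

An empty list does not prove the output is complete; it only means neither of these two signals fired.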

Performance Comparison

Long-Horizon Tasks

Claude is built to sustain long-running agentic tasks (especially with large context), which matters for:

- Multi-module refactors

- Migration planning

- Reviewing large PR sets

- End-to-end architecture changes

This aligns with Anthropic’s positioning for Opus-class upgrades. (anthropic.com)

GPT-4 can do long-horizon tasks too, but many teams now reach for newer OpenAI models if they want longer context and more modern tool-calling patterns. (developers.openai.com)

Reliability Under Constraint

In advanced usage, “reliability” often means:

- Lower hallucination rate in technical explanations

- Stable formatting across long outputs

- Consistent adherence to constraints (schemas, lint rules, diff-only output)

Claude tends to be cautious, sometimes at the cost of being overly conservative. GPT-4 tends to be more willing to “fill in gaps” if your prompt is underspecified—useful for speed, risky for correctness.

Practical takeaway: if correctness matters, you should assume both models can be confidently wrong and build verification into the workflow (tests, type checking, linters, and real-world validation).
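A verification gate can be as small as "parse it, run it, check it" before generated code is accepted. The sketch below is illustrative only: the function name and the shape of the checks dictionary are hypothetical, and it uses exec, which should only ever run inside a sandbox when the source comes from a model.

```python
import ast


def verify_python_snippet(source, checks):
    """Return a list of failure messages; an empty list means all checks passed.

    checks maps a label to a callable that receives the executed namespace
    and returns True on success.
    """
    try:
        ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg} (line {exc.lineno})"]

    namespace = {}
    # WARNING: executes untrusted code. Run this only in an isolated sandbox.
    exec(compile(source, "<generated>", "exec"), namespace)

    failures = []
    for label, check in checks.items():
        try:
            if not check(namespace):
                failures.append(f"check failed: {label}")
        except Exception as exc:
            failures.append(f"check raised: {label}: {exc!r}")
    return failures


if __name__ == "__main__":
    generated = "def add(a, b):\n    return a - b\n"  # plausible but wrong
    checks = {"adds two numbers": lambda ns: ns["add"](2, 3) == 5}
    print(verify_python_snippet(generated, checks))
```

In a real pipeline the same gate would typically call your test runner, type checker, and linter rather than inline lambdas; the point is that acceptance depends on checks, not on how confident the output sounds.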

Pricing Breakdown

Pricing changes frequently, but a safe way to think about it is cost-per-output at the quality level you need.

Claude:

- Anthropic lists Opus 4.6 pricing starting at $5 per million input tokens and $25 per million output tokens. (anthropic.com)

OpenAI:

- OpenAI’s current pricing pages highlight newer models (for example, GPT-4.1 pricing) rather than “GPT-4” as the headline choice, which reflects the broader shift away from legacy GPT-4 in modern deployments. (openai.com)

Practical takeaway: if you are still using GPT-4 endpoints for production, validate whether the “true” best comparison is Claude vs GPT-4.1 (or Claude vs GPT-5-class) based on what you can actually deploy at scale.
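To make the per-token figures concrete, here is a small cost calculation using the Opus 4.6 list prices quoted above ($5 per million input tokens, $25 per million output tokens). The request sizes are hypothetical, and because the source says pricing "starts at" those figures, actual rates for your tier or context length may differ.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)


def request_cost(input_tokens, output_tokens,
                 input_price_per_m, output_price_per_m):
    """Cost in dollars for one request, given per-million-token prices."""
    input_cost = Decimal(input_tokens) * Decimal(str(input_price_per_m))
    output_cost = Decimal(output_tokens) * Decimal(str(output_price_per_m))
    return (input_cost + output_cost) / MILLION


if __name__ == "__main__":
    # Hypothetical request: 200,000 input tokens, 8,000 output tokens.
    # 200,000 * $5 / 1M = $1.00 and 8,000 * $25 / 1M = $0.20
    cost = request_cost(200_000, 8_000, 5, 25)
    print(f"${cost:.2f}")  # prints "$1.20"
```

Running the same function with another model's published prices gives a like-for-like comparison for your actual input and output mix, which is usually more informative than comparing headline rates.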

Best For: Use Case Segmentation

Claude is best for

- Very large-context work (repo-scale reasoning, massive documents) (platform.claude.com)

- Careful planning and structured debugging

- Code review and architecture-level analysis

GPT-4 is best for

- Legacy prompt compatibility and established pipelines

- Short-to-medium technical tasks where speed and iteration matter

- Workflows where you already tuned prompts specifically for GPT-4’s behavior

If you’re greenfielding an advanced workflow in 2026, consider whether you really mean GPT-4 (legacy) or OpenAI’s newer technical stack (GPT-4.1/GPT-5-class). (developers.openai.com)

SEO-Specific Section for Advanced Users

Advanced users often use AI for SEO in a very different way than beginners: not “write me an article,” but “build me a system.”

Which is better for keyword research?

Neither Claude nor GPT-4 has first-party access to live keyword databases. They can generate:

- Topic clusters and semantic variations

- SERP intent hypotheses

- Content briefs and internal linking structures

But they cannot reliably validate search volume, difficulty, or whether a keyword is worth targeting right now.

A professional workflow is:

Use AI to generate content ideas and outlines → Validate keywords in Ranktracker → Track Top 100 positions daily.

That last step is what makes the workflow real: you move from plausible content to measurable performance.

Which produces more rankable content?

“Rankable” content comes from:

- Correct intent matching

- Entity and subtopic coverage

- Competitive SERP alignment

- Iteration based on ranking movement

Claude’s structured approach can help produce cleaner briefs and tighter logic. GPT-4’s legacy behavior can be great for consistent formatting if your team already has prompt libraries tuned for it.

But neither model guarantees rankings. Rankings come from an iteration loop that includes validation and tracking.
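The tracking half of that loop reduces to comparing position snapshots over time. As a minimal sketch, assuming you can export keyword positions as simple dictionaries (the function name, the data shape, and the sample keywords are all hypothetical and not tied to any tool's export format):

```python
def rank_movement(previous, current, not_ranked=101):
    """Return position change per keyword; positive means the page moved up.

    Keywords missing from a snapshot are treated as outside the top 100.
    """
    keywords = sorted(set(previous) | set(current))
    return {
        kw: previous.get(kw, not_ranked) - current.get(kw, not_ranked)
        for kw in keywords
    }


if __name__ == "__main__":
    # Hypothetical snapshots taken on two different days.
    last_week = {"claude vs gpt-4": 12, "llm context window": 5}
    today = {"claude vs gpt-4": 8, "ai code review": 40}
    for keyword, delta in rank_movement(last_week, today).items():
        print(keyword, delta)
```

Keywords with large negative movement are candidates for a revised brief, which is where the model comes back into the loop.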

Verdict

For advanced users, Claude vs GPT-4 is less about brand preference and more about constraints:

- If you need massive context and long-horizon technical work, Claude’s 1M context option (where available) is a major advantage. (platform.claude.com)

- If you’re comparing “best OpenAI technical capability in 2026,” the practical comparison is often Claude vs GPT-4.1 or Claude vs GPT-5-class—because OpenAI’s own docs and pricing emphasize these newer models and GPT-4 variants have been in deprecation cycles. (developers.openai.com)

If you are sticking to GPT-4 specifically for compatibility reasons, GPT-4 can still be a strong choice. But if you’re optimizing for reasoning depth, long context, and technical output quality in 2026, Claude is frequently the more direct fit, unless you move up the OpenAI stack to GPT-4.1/GPT-5-class.